Last week I decided to dig into local, lightweight large language models, which I’ve been interested in for a while. I specifically tried Gemma 3 270M, a small LLM with 270 million parameters that can run on phones, laptops, and even Raspberry Pi clusters.

I wanted to know if a model this small can genuinely give good AI answers. Can it do things like summarizing, coding, or creative writing without using up my device’s battery? And most crucially, can it perform all of this without connecting to the internet?

This is my honest, hands-on review of Gemma 3 270M.

Setting up and making plans

I ran Gemma 3 270M on three setups to see how it held up in different situations:

- Pixel 9 Pro smartphone, to test battery life and mobile efficiency.

- Old laptop from 2019 to check if it could keep up with newer hardware.

- Raspberry Pi cluster to test how well edge devices work.

Before I started, I expected it to be fast and light, but I wasn’t sure how well it would handle long text inputs, instruction-following tasks, and domain-specific prompts. I also wanted to see whether quantized variants, like INT4, would make the quality noticeably worse.

Step-by-Step Experience

1. First launch and setup

Setting up Gemma 3 270M was very easy. It started in seconds on the laptop, and the mobile setup went just as smoothly. I used Ollama on my desktop and Transformers.js for testing offline in browsers. Not needing an internet connection was great for privacy.

The main interface I used to run Gemma 3 270M – simple, lightweight, and ready for offline execution.
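For readers who prefer scripting over a chat window, here is a minimal sketch of calling a locally running Ollama server from Python. It assumes Ollama is listening on its default local port and that the model was pulled under the tag `gemma3:270m`; the tag and the helper names `build_request` and `generate` are assumptions for illustration, so check `ollama list` for the name on your machine.

```python
import json
import urllib.request

# Ollama's default local endpoint; nothing leaves the machine.
OLLAMA_URL = "http://localhost:11434/api/generate"
# Assumed model tag; verify it with `ollama list`.
MODEL_TAG = "gemma3:270m"


def build_request(prompt: str, model: str = MODEL_TAG) -> dict:
    """Build the JSON body for one non-streaming generation call."""
    return {"model": model, "prompt": prompt, "stream": False}


def generate(prompt: str, url: str = OLLAMA_URL, timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the generated text."""
    body = json.dumps(build_request(prompt)).encode("utf-8")
    req = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        # With "stream": False the server replies with a single JSON object.
        return json.loads(resp.read().decode("utf-8"))["response"]
```

With the server running, `generate("Write a two-sentence story about a lighthouse.")` should return the model's reply as a plain string.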

2. Testing the creation of text

I asked it to write a short story based on a random prompt. Even though the model is small, the story was coherent and had a clear structure, and it generated without trouble even on my aging laptop. With long prompts I saw a little repetition, but overall it was impressive for a model with 270 million parameters.

Then I tested summarization. I gave it a 2,000-word article about AI in construction chemicals and asked for a short synopsis. The summary was easy to read and covered all the important points. There was very little delay, even on a phone.
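As a rough illustration of the summarization test, the sketch below builds a summary prompt and checks whether it is likely to fit in the model's context window. The prompt wording, the helper names, and the four-characters-per-token rule of thumb are my assumptions, not the exact setup I ran; the 32,000-token limit is the figure discussed in the results further down.

```python
CONTEXT_WINDOW_TOKENS = 32_000  # context size quoted in this review
CHARS_PER_TOKEN = 4             # crude heuristic for English text, not a tokenizer


def estimate_tokens(text: str) -> int:
    """Very rough token estimate: about one token per four characters."""
    return max(1, len(text) // CHARS_PER_TOKEN)


def build_summary_prompt(article: str, max_sentences: int = 3) -> str:
    """Wrap an article in a short, explicit summarization instruction."""
    return (
        f"Summarize the following article in at most {max_sentences} sentences. "
        "Keep the key facts and leave out minor details.\n\n"
        f"Article:\n{article.strip()}\n\nSummary:"
    )


def fits_in_context(prompt: str, reserved_for_output: int = 512) -> bool:
    """Check that the prompt plus room for the answer stays under the limit."""
    return estimate_tokens(prompt) + reserved_for_output <= CONTEXT_WINDOW_TOKENS
```

A 2,000-word article is only a few thousand tokens by this estimate, so it fits comfortably. For an exact count you would use the model's own tokenizer instead of the heuristic.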

3. Following instructions and doing coding tasks

One of the biggest surprises was how well Gemma 3 270M followed directions. I tried prompts with more than one step:

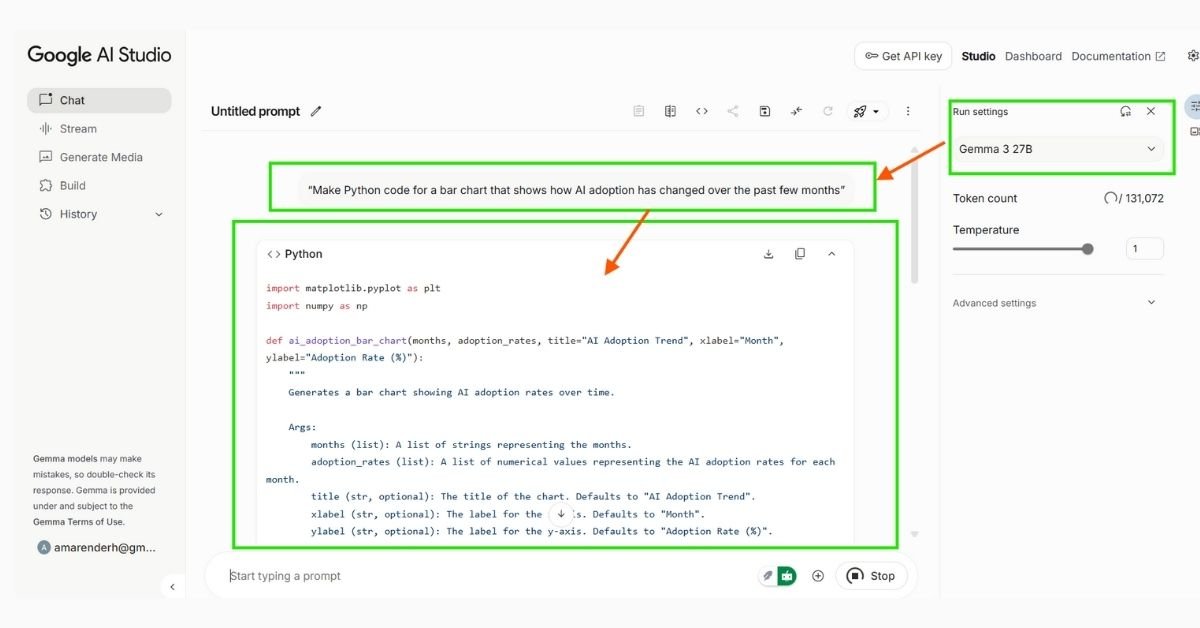

“Make Python code for a bar chart that shows how AI adoption has changed over the past few months.”

“Put this dataset into simple English and point out any strange things.”

In both cases, the output was correct and useful, and I barely had to fix anything. This showed that the instruction-tuned version really works, even on low-end hardware.

Example of a real prompt and Gemma 3 270M’s output: lightweight yet capable of structured responses.

To test how well Gemma 3 270M handles structured instructions, I asked it to generate Python code for a simple bar chart. Here’s the prompt and the output:

Prompt:

“Make Python code for a bar chart that shows how AI adoption has changed over the past few months”

Output:

    import matplotlib.pyplot as plt
    import numpy as np

    def ai_adoption_bar_chart(months, adoption_rates, title="AI Adoption Trend", xlabel="Month", ylabel="Adoption Rate (%)"):
        """
        Generates a bar chart showing AI adoption rates over time.

        Args:
            months (list): A list of strings representing the months.
            adoption_rates (list): A list of numerical values representing the AI adoption rates for each month.
            title (str, optional): The title of the chart. Defaults to "AI Adoption Trend".
            xlabel (str, optional): The label for the x-axis. Defaults to "Month".
            ylabel (str, optional): The label for the y-axis. Defaults to "Adoption Rate (%)".
        """
        if len(months) != len(adoption_rates):
            raise ValueError("The number of months and adoption rates must be the same.")

        # Create the bar chart
        plt.figure(figsize=(10, 6))  # Adjust figure size for better readability
        plt.bar(months, adoption_rates, color='skyblue')

        # Add labels and title
        plt.xlabel(xlabel)
        plt.ylabel(ylabel)
        plt.title(title)

        # Rotate x-axis labels for better readability if needed
        plt.xticks(rotation=45, ha="right")

        # Add grid lines for better visualization
        plt.grid(axis='y', linestyle='--')

        # Add value labels on top of the bars (optional)
        for i, v in enumerate(adoption_rates):
            plt.text(i, v + 0.5, str(v) + '%', ha='center', va='bottom')  # Adjust offset (0.5) as needed

        # Adjust layout to prevent labels from overlapping
        plt.tight_layout()

        # Show the chart
        plt.show()

    if __name__ == '__main__':
        # Example Usage:
        months = ["Jan", "Feb", "Mar", "Apr", "May", "Jun"]
        adoption_rates = [15, 18, 25, 32, 40, 45]  # Example adoption rates (in percentage)
        ai_adoption_bar_chart(months, adoption_rates)

        # Another example with different data and title
        months2 = ["July", "Aug", "Sep", "Oct", "Nov", "Dec"]
        adoption_rates2 = [48, 52, 55, 50, 47, 53]
        ai_adoption_bar_chart(months2, adoption_rates2, title="AI Adoption - Second Half of Year")

The generated code ran without major changes and produced the chart as expected, showing that even a lightweight model can handle multi-step coding instructions.

4. Making Changes and Tweaks

I used LoRA to fine-tune the model on a small set of chemical product summaries because I was interested in how it could be used in that field. For a modest dataset, the method only needed 8GB of RAM and a 4GB GPU. The results were much better for my domain: Gemma 3 270M learned to produce more relevant, accurate summaries without much extra work.
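To show why LoRA is so cheap, here is a small plain-Python sketch of the idea: the original weight matrix W stays frozen and only two thin matrices A and B are trained, giving an effective weight of W + (alpha / rank) * B·A. The function names and the 640 x 640 layer size in the usage note are illustrative assumptions, not values taken from my training run or from the model card.

```python
def lora_param_counts(d_in: int, d_out: int, rank: int) -> tuple[int, int]:
    """Return (full_weight_params, lora_params) for one linear layer."""
    full = d_in * d_out
    lora = rank * (d_in + d_out)  # A is rank x d_in, B is d_out x rank
    return full, lora


def matmul(a, b):
    """Plain-Python matrix product for small nested lists."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def lora_effective_weight(w, a, b, alpha: float, rank: int):
    """Compute W + (alpha / rank) * B @ A; W is frozen, A and B are trained."""
    scale = alpha / rank
    delta = matmul(b, a)  # low-rank update with the same shape as W
    return [
        [w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
        for w_row, d_row in zip(w, delta)
    ]
```

For a hypothetical 640 x 640 layer with rank 8, `lora_param_counts(640, 640, 8)` gives 409,600 frozen weights against 10,240 trainable ones, about 2.5% of the layer. That ratio is why a small GPU can be enough.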

5. Test for Privacy and Offline Use

I didn’t have to worry about transferring data online because everything ran on my own computer. This was especially comforting when I tried out private product information and AI-generated lab notes. One of Gemma 3 270M’s best features is that you have complete control over your data, and offline execution really drove it home.

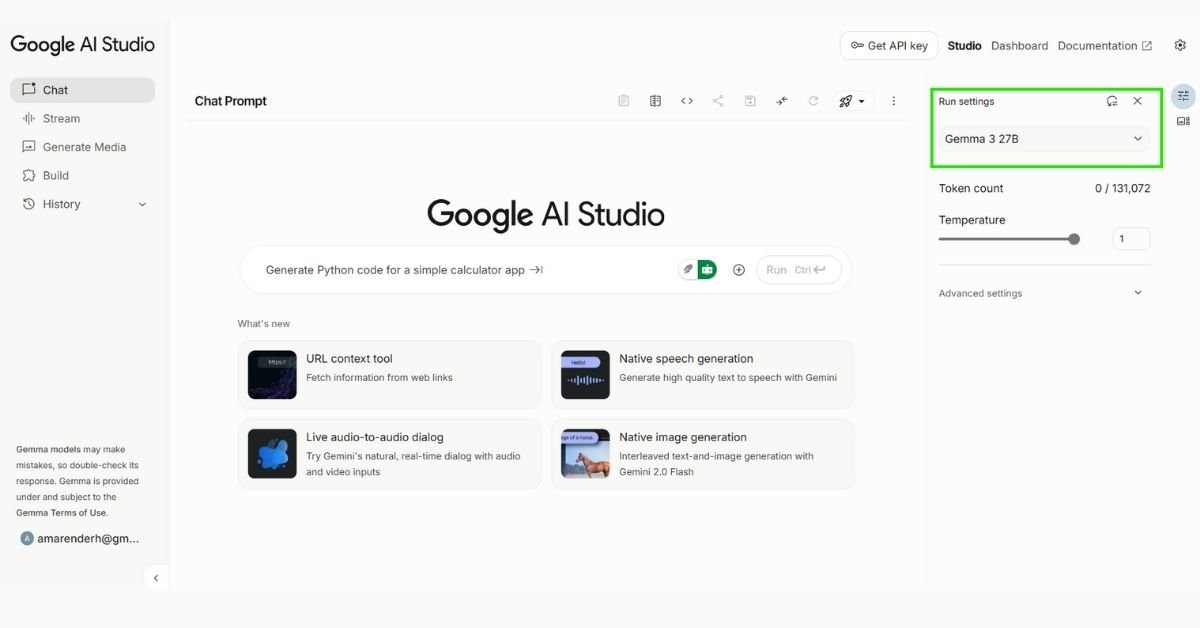

Selecting Gemma 3 270M within the interface – just a few clicks to get started on mobile and desktop.

Results: Important Results and Surprises

Battery Life and Efficiency: In INT4-quantized mode, 25 conversations on my Pixel 9 Pro used only 0.75% of the battery. On the laptop it consumed little RAM or CPU. The model felt very light, yet it still worked well.

Versatility: Gemma 3 270M did a surprisingly good job on a wide range of tasks, from composing stories to making summaries and generating Python code.

Following Instructions: The model gave correct answers to structured prompts, which made it suitable for tasks like building tables, extracting data, or summarizing publications.

Surprising Context Handling: Even though the model is small, it can handle long contexts of up to 32,000 tokens, which let me work with big documents without splitting them up.
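The battery figure is easier to picture with a little arithmetic. The sketch below works out the cost per conversation and, as a side calculation, the approximate size of the weights at different precisions. It assumes battery drain scales linearly and counts weights only (no runtime overhead), so treat the outputs as back-of-the-envelope numbers.

```python
BATTERY_USED_PERCENT = 0.75  # figure reported above
CONVERSATIONS = 25           # figure reported above
PARAMS = 270_000_000         # parameter count from the model name

# Naive linear extrapolation from the measured run.
per_conversation = BATTERY_USED_PERCENT / CONVERSATIONS
conversations_per_charge = 100 / per_conversation


def weight_size_mb(params: int, bits_per_weight: int) -> float:
    """Approximate weight storage in megabytes (1 MB = 10**6 bytes)."""
    return params * bits_per_weight / 8 / 1_000_000


print(f"{per_conversation:.3f}% of battery per conversation")
print(f"about {round(conversations_per_charge)} conversations per full charge")
print(f"INT4 weights: {weight_size_mb(PARAMS, 4):.0f} MB")
print(f"16-bit weights: {weight_size_mb(PARAMS, 16):.0f} MB")
```

That is roughly 0.03% per conversation, and about 135 MB of weights at 4 bits versus 540 MB at 16 bits, which helps explain why the model feels so light on a phone.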

Challenges and Performance Limits: Most of the time, the model did a great job, but some complex reasoning tasks showed that it wasn’t as good as bigger models. Quantized versions were a little less polished, but the results were still clear and useful.
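To give a feel for why quantized versions come out slightly less polished, here is a simplified sketch of symmetric 4-bit quantization: each weight is rounded to one of 16 integer levels and scaled back on use, so a small rounding error is unavoidable. This is a toy per-tensor scheme with hypothetical function names, not the method used to produce the actual Gemma INT4 checkpoints, which is more elaborate.

```python
def quantize_int4(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor quantization to signed 4-bit integers in [-8, 7]."""
    max_abs = max(abs(w) for w in weights)
    # Map the largest magnitude to level 7; fall back to 1.0 for all-zero input.
    scale = max_abs / 7 if max_abs > 0 else 1.0
    q = [max(-8, min(7, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Map 4-bit integer levels back to approximate float weights."""
    return [v * scale for v in q]


def max_round_trip_error(weights: list[float]) -> float:
    """Largest absolute difference between a weight and its restored value."""
    q, scale = quantize_int4(weights)
    restored = dequantize(q, scale)
    return max(abs(w - r) for w, r in zip(weights, restored))
```

For example, a weight of 0.12 in a tensor whose largest value is 0.7 lands on level 1 and comes back as roughly 0.1. Each individual error is at most half a quantization step, but across millions of weights those small shifts add up to slightly rougher output.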

Fine-Tuning Advantages: Even a small, simple fine-tune made a big difference in the domain-specific outputs. Gemma 3 270M showed that you don’t always need big models to get good results in a specific area.

Reflection: What I Learned and an Invitation to Read

Using Gemma 3 270M changed the way I think about small, local LLMs. I learned that efficiency, privacy, and the ability to work offline can be just as important as the size of the model. You can have a genuinely useful AI helper on your device without relying on cloud infrastructure.

If you’re trying Gemma 3 270M, here are some tips:

- Start with a small device; even older laptops and smartphones can run it.

- Use quantization wisely. INT4 works well, but unquantized modes are better if you want the best quality.

- Fine-tune for your domain. A little data can go a long way.

- Experiment offline. One big benefit is that you have full privacy and control.

If you want to learn more about AI, check out Gemma 3 270M. Try it on your own tasks, see what makes it tick, and let me know how it works for you!

Explore More & Connect

If you enjoyed this hands-on review, you might also like other posts in the AI Tools category

I’d also love to connect and exchange ideas – feel free to reach out on LinkedIn

In short, small can be powerful, and local LLMs like Gemma 3 270M show that you don’t always need billions of parameters to produce useful results in the real world.

Last updated on August 19, 2025

Hi, I’m Amarender Akupathni — founder of Amrtech Insights and a tech enthusiast passionate about AI and innovation. With 10+ years in science and R&D, I simplify complex technologies to help others stay ahead in the digital era.